Meta has deployed a swarm of over 50 specialized AI agents to systematically map the invisible 'tribal knowledge' embedded within its large-scale data processing pipelines. The effort, announced today by the company's AI infrastructure team, addresses a problem that had left earlier AI coding assistants ineffective: the agents lacked context about the codebase. The team reports 100% code module coverage and a preliminary 40% reduction in tool calls per task.

"We quickly found that AI agents weren't making useful edits quickly enough," said Dr. Jane Holt, Meta's AI infrastructure lead. "They had no map of the codebase's hidden relationships. This system gives them a structured guide, so they stop guessing and start contributing."

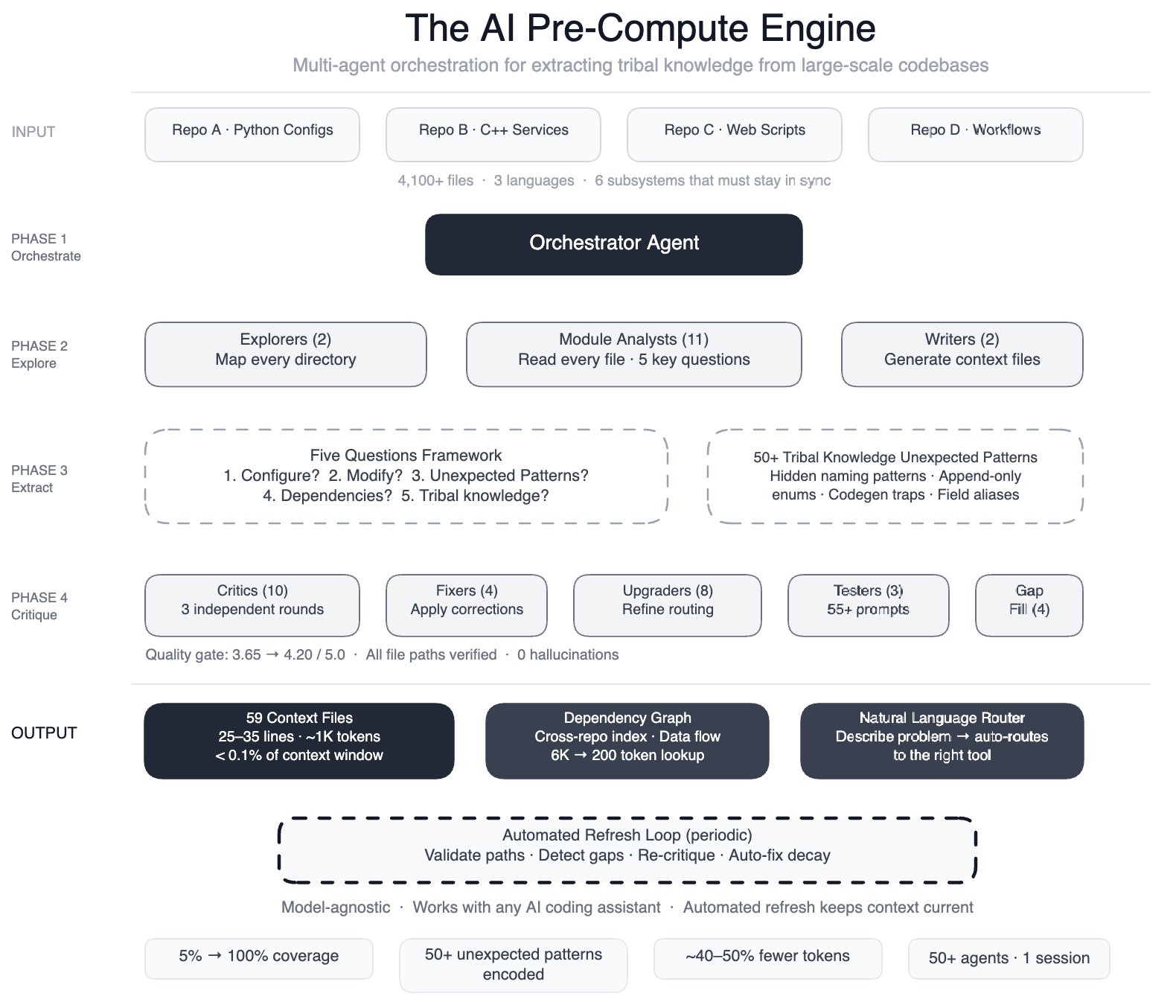

The pre-compute engine, which operates as a self-maintaining knowledge layer, reads every one of the more than 4,100 files spread across four repositories and three programming languages. It produces 59 concise context files that encode design choices, dependencies, and non-obvious patterns that previously lived only in engineers' heads.

"We documented more than 50 'non-obvious patterns,'" Holt added. "These are the subtle relationships that, if missed, lead to silent bugs—like configuration modes that use different field names for the same operation, or deprecated enum values that must never be removed for serialization compatibility."

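The enum pattern Holt mentions can be illustrated with a minimal Python sketch. The enum, its members, and the record layout below are hypothetical and are not taken from Meta's codebase; the point is only that a value still present in stored data has to remain decodable.

```python
from enum import IntEnum


class FieldKind(IntEnum):
    """Hypothetical enum; names and values are illustrative only."""
    RAW = 1
    LEGACY_HASHED = 2  # deprecated, but kept so old serialized records still decode
    NORMALIZED = 3


def decode_kind(stored_value: int) -> FieldKind:
    # Lookup by value raises ValueError if the member has been deleted,
    # which is how removing a "dead" value breaks previously written data.
    return FieldKind(stored_value)


old_record = {"kind": 2}  # written before LEGACY_HASHED was deprecated
print(decode_kind(old_record["kind"]).name)  # prints LEGACY_HASHED
```

An agent that sees no live references to `LEGACY_HASHED` might reasonably propose deleting it, which is why this kind of rule needs to be written down somewhere the agent will read.
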
Background: The Problem of AI Tools Without a Map

Meta's pipeline operates as config-as-code: Python configurations, C++ services, and Hack automation scripts that must stay in sync across multiple repositories. A single data field onboarding touches configuration registries, routing logic, DAG composition, validation rules, C++ code generation, and automation scripts—six subsystems that require perfect coordination.
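
To make the coordination problem concrete, here is a rough sketch of a check that a field has been registered in all six subsystems. The subsystem labels, the `registrations` structure, and the field name are assumptions made for illustration; the article does not describe how Meta actually tracks this.

```python
from __future__ import annotations

# The six subsystems named in the article; the keys are hypothetical labels.
SUBSYSTEMS = (
    "config_registry",
    "routing_logic",
    "dag_composition",
    "validation_rules",
    "cpp_codegen",
    "automation_scripts",
)


def missing_subsystems(field: str, registrations: dict[str, set[str]]) -> list[str]:
    """Return the subsystems that do not yet know about `field`."""
    return [name for name in SUBSYSTEMS if field not in registrations.get(name, set())]


registrations = {
    "config_registry": {"user_region"},
    "routing_logic": {"user_region"},
    "dag_composition": {"user_region"},
    "validation_rules": set(),
    "cpp_codegen": {"user_region"},
    # automation_scripts has no entry at all
}
print(missing_subsystems("user_region", registrations))
# prints ['validation_rules', 'automation_scripts']
```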

Earlier AI-powered systems could handle operational tasks like scanning dashboards and suggesting mitigations. But when extended to development, they failed. "The AI had no map," Holt explained. "It didn't know that swapping field names between two modes would produce silent wrong output. Without context, agents would guess, explore, guess again, and produce code that compiled but was subtly wrong."
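
The field-name hazard Holt describes might look something like the following sketch. The mode names and field names are invented for illustration; only the general shape of the problem (two modes, two names for the same operation) comes from the article.

```python
# Hypothetical modes and field names, for illustration only.
FIELD_FOR_MODE = {
    "batch": "source_column",
    "streaming": "input_key",
}


def read_source(config: dict, mode: str) -> str:
    """Fetch the source field for `mode`, failing loudly on a mismatch."""
    field = FIELD_FOR_MODE[mode]  # KeyError on an unknown mode
    if field not in config:
        raise KeyError(f"{mode!r} config must set {field!r}")
    return config[field]


streaming_cfg = {"input_key": "events.user_id"}
print(read_source(streaming_cfg, "streaming"))  # prints events.user_id
```

A lenient `streaming_cfg.get("source_column")` would return `None` rather than raise, so code using the wrong mode's field name could run to completion with no source set. That is the kind of output that compiles and is still wrong.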

The Approach: Teaching Agents Before They Explore

The team used a large-context-window model with task orchestration to structure the knowledge extraction in multiple phases. Two explorer agents first mapped the codebase, then 11 module analysts read every file and answered five key questions about design intent, dependencies, and edge cases.

Subsequent phases involved two writers generating context files, more than 10 critic passes spread over three rounds of independent quality review, four fixers applying corrections, eight upgraders refining the routing layer, three prompt testers validating 55+ queries across five personas, four gap-fillers covering remaining directories, and three final critics running integration tests. In total, more than 50 specialized tasks were orchestrated in a single session.
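
The phased structure can be sketched as a simple pipeline in which each phase fans out to several workers and hands its outputs to the next phase. This is an assumption-laden illustration, not Meta's orchestrator: the strictly sequential ordering, the `Phase` type, and the stub task are all hypothetical, and the critic count uses 10 as a floor because the article says only "more than 10".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Phase:
    role: str
    workers: int  # count stated in the article; critic passes use the floor of 10


PHASES = [
    Phase("explorer", 2),
    Phase("module_analyst", 11),
    Phase("writer", 2),
    Phase("critic_pass", 10),
    Phase("fixer", 4),
    Phase("upgrader", 8),
    Phase("prompt_tester", 3),
    Phase("gap_filler", 4),
    Phase("final_critic", 3),
]


def run_pipeline(phases, run_task):
    """Run phases in order; each phase receives the previous phase's outputs."""
    artifacts = []
    for phase in phases:
        artifacts = [run_task(phase.role, i, artifacts) for i in range(phase.workers)]
    return artifacts


def stub_task(role, index, upstream):
    # Placeholder for a model call: just records what the worker would see.
    return f"{role}[{index}] saw {len(upstream)} upstream artifacts"


final = run_pipeline(PHASES, stub_task)
print(final[0])  # prints final_critic[0] saw 4 upstream artifacts
```

In a real system `stub_task` would be replaced by a call to a model with a role-specific prompt, and workers within a phase could run concurrently since they depend only on the previous phase.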

The result: AI agents now have structured navigation guides for 100% of code modules, up from 5%, covering all 4,100+ files across four repositories. The knowledge layer is model-agnostic, meaning it works with most leading AI models.

What This Means for AI-Assisted Development

The system's self-maintaining nature is a key innovation. Automated jobs run every few weeks to validate file paths, detect coverage gaps, re-run quality critics, and auto-fix stale references. The AI isn't just a consumer of this infrastructure—it is the engine that runs it.
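
Two of the maintenance checks, validating file paths and detecting coverage gaps, could be approximated as below. The context-file names, the path layout, and the rule that a module is "covered" when any context file cites a path under it are assumptions for the sake of the sketch; the article does not specify the file format or the coverage definition.

```python
from __future__ import annotations


def stale_references(referenced: dict[str, list[str]], existing: set[str]) -> dict[str, list[str]]:
    """Map each context file to the paths it cites that no longer exist."""
    report = {}
    for context_file, paths in referenced.items():
        missing = [p for p in paths if p not in existing]
        if missing:
            report[context_file] = missing
    return report


def coverage_gaps(modules: set[str], referenced: dict[str, list[str]]) -> list[str]:
    """List modules that no context file mentions (top-level directory = module)."""
    covered = {p.split("/", 1)[0] for paths in referenced.values() for p in paths}
    return sorted(modules - covered)


existing = {"routing/rules.py", "dag/compose.py", "codegen/emit.cpp"}
referenced = {
    "routing.ctx.md": ["routing/rules.py", "routing/legacy.py"],
    "dag.ctx.md": ["dag/compose.py"],
}
print(stale_references(referenced, existing))
# prints {'routing.ctx.md': ['routing/legacy.py']}
print(coverage_gaps({"routing", "dag", "codegen"}, referenced))
# prints ['codegen']
```

The stale-reference report is what an auto-fix step would consume, and the gap list is what would trigger a new round of analysis for uncovered modules.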

"This isn't just about fixing our own pipeline," Holt said. "It's a blueprint for any organization with complex, multi-language codebases where critical knowledge is scattered across teams. We've shown that AI can map that knowledge automatically and keep itself up to date."

Industry analysts see broader implications. "Meta has demonstrated a way to bridge the gap between human tribal knowledge and AI-driven development," said technology analyst Dr. Mark Chen. "If this approach can be scaled and generalized, it could fundamentally change how enterprise AI coding assistants are built." The preliminary 40% reduction in tool calls per task suggests developers can expect faster, more accurate AI assistance—and fewer silent bugs.